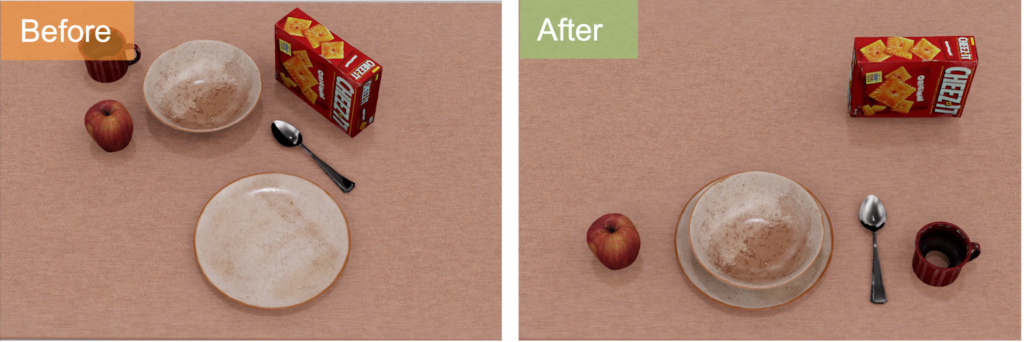

Imagine this: you walk into your kitchen and say to your home assistant, “Set up the table for breakfast!” Without any further instructions, your robot lays out the plates, utensils, and food items in a way that follows dining conventions and creates an aesthetically pleasing setting, just as you had in mind.

Instruction: “Set up a table for breakfast.”

For these robots to be truly helpful, they need to understand and act on natural human instructions without exhaustive programming. This capability would make robots accessible to everyone, not just those with technical expertise, and would greatly enhance their usefulness in everyday life. Our research aims to bridge this gap by developing a system that allows robots to interpret and execute underspecified natural-language instructions for functional object arrangement (FORM).

Set It Up:

Y. Xu, J. Mao, Y. Du, T. Lozano-Perez, L.P. Kaelbling, and D. Hsu. “Set it up!”: Functional object arrangement with compositional generative models. In Proc. Robotics: Science & Systems, 2024.


We present “Set It Up”, a neuro-symbolic framework that learns to specify the goal poses of objects from a few training examples and a structured natural-language task specification. Set It Up uses a grounding graph, composed of abstract spatial relations among objects (e.g., left-of), as its intermediate representation. This representation decomposes the FORM problem into two stages: (i) predicting the grounding graph among objects and (ii) predicting object poses given the grounding graph. For (i), Set It Up leverages large language models (LLMs) to induce Python programs from a few training examples and a task specification. The induced program can be executed to generate grounding graphs in novel scenarios. For (ii), Set It Up pre-trains a collection of diffusion models to capture primitive spatial relations and online