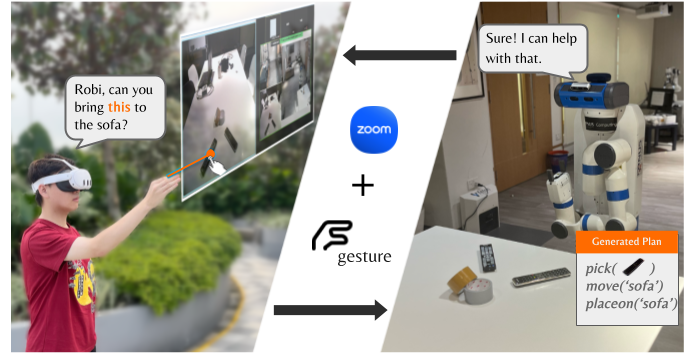

Imagine a future where distance no longer constrains our ability to manage household tasks. Picture a robot assistant capable of remotely interpreting spoken commands and gestures to check your refrigerator or reheat a meal before you get home. Such a robotic system would fundamentally change the way we interact with our homes, bringing a new level of convenience and efficiency to daily life. In this project, we introduce Robi Butler, a multimodal interaction system that enables seamless communication between remote users and household robots to execute various household tasks.

Robi Butler:

A. Xiao, N. Janaka, T. Hu, A. Gupta, K. Li, C. Yu, and D. Hsu. Robi Butler: Remote multimodal interactions with household robot assistant. In IEEE Int. Conf. on Robotics & Automation, 2025.


Robi Butler allows the human user to monitor the robot's environment from a first-person view, issue voice or text commands, and specify target objects through hand-pointing gestures. At its core, a high-level behavior module, powered by Large Language Models (LLMs), interprets multimodal instructions to generate multi-step action plans. Each plan consists of open-vocabulary primitives supported by vision-language models, enabling the robot to process both textual and gestural inputs. Zoom provides a convenient interface for remote interaction between the human and the robot. The integration of these components allows Robi Butler to ground remote multimodal instructions in real-world home environments in a zero-shot manner. We evaluated the system on various household tasks, demonstrating its ability to execute complex user commands with multimodal inputs. We also conducted a user study to examine how multimodal interaction influences user experience in remote human-robot interaction. These results suggest that, with advances in robot foundation models, we are moving closer to the reality of remote household robot assistants.
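To make the flow from a multimodal instruction to a multi-step plan more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the system's actual implementation: the `Instruction` and `Step` types, the primitive names `go_to` and `pick`, and the rule-based `toy_planner` (a stand-in for the LLM-powered behavior module) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Instruction:
    """A remote user's multimodal instruction (hypothetical structure)."""
    text: str                             # voice or text command
    pointed_object: Optional[str] = None  # object selected by a pointing gesture, if any


@dataclass
class Step:
    """One plan step: a primitive name plus an open-vocabulary target description."""
    primitive: str
    target: str


def resolve_references(instruction: Instruction) -> str:
    """Substitute the gestured object for "this"/"that" in the command text."""
    if instruction.pointed_object is None:
        return instruction.text
    words = [
        instruction.pointed_object if word.lower() in ("this", "that") else word
        for word in instruction.text.split()
    ]
    return " ".join(words)


def toy_planner(command: str) -> list[Step]:
    """Rule-based stand-in for an LLM planner (assumption: handles only "pick up X")."""
    prefix = "pick up "
    if command.lower().startswith(prefix):
        target = command[len(prefix):]
        return [Step("go_to", target), Step("pick", target)]
    return []  # unknown command: empty plan


def make_plan(instruction: Instruction,
              planner: Callable[[str], list[Step]]) -> list[Step]:
    """Ground the gesture in the text, then ask the planner for a multi-step plan."""
    return planner(resolve_references(instruction))


# Hypothetical primitive table; a real robot would call perception and control here.
PRIMITIVES: dict[str, Callable[[str], str]] = {
    "go_to": lambda target: f"go_to({target})",
    "pick": lambda target: f"pick({target})",
}


def execute(steps: list[Step]) -> list[str]:
    """Dispatch each step to its primitive and collect a log of what was invoked."""
    return [PRIMITIVES[step.primitive](step.target) for step in steps]


if __name__ == "__main__":
    instruction = Instruction("pick up that", pointed_object="red mug")
    for line in execute(make_plan(instruction, toy_planner)):
        print(line)
```

In this sketch the gesture is folded into the text before planning, so the planner only ever sees a fully specified command. That is one possible design choice for combining the two modalities; the paper's own mechanism may differ.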