Our goal is to develop a formal computational framework of trust, supported by experimental evidence and predictive models of human behaviors, to enable automated decision-making for fluid collaboration between humans and robots.

Trust-POMDP

M. Chen, S. Nikolaidis, H. Soh, D. Hsu, and S. Srinivasa. Planning with trust for human-robot collaboration. In Proc. ACM/IEEE Int. Conf. on Human-Robot Interaction, 2018.

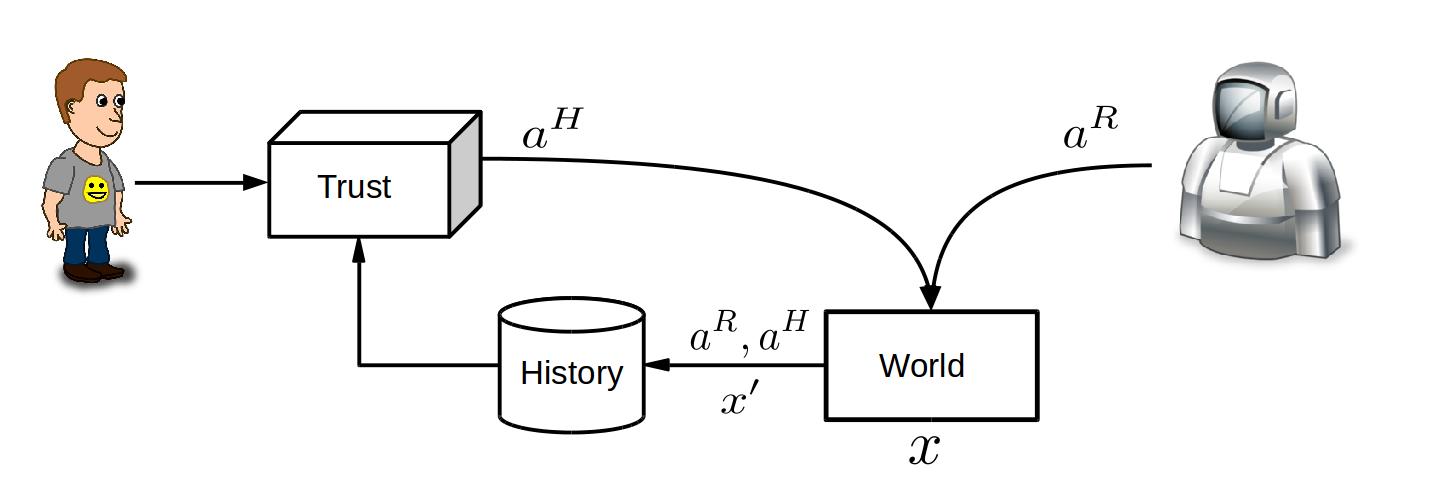

Trust is essential for human-robot collaboration and user adoption of autonomous systems, such as robot assistants. This paper introduces a computational model which integrates trust into robot decision-making. Specifically, we learn from data a partially observable Markov decision process (POMDP) with human trust as a latent variable. The trust-POMDP model provides a principled approach for the robot to (i) infer the trust of a human teammate through interaction, (ii) reason about the effect of its own actions on human behaviors, and (iii) choose actions that maximize team performance over the long term. We validated the model through human subject experiments on a table-clearing task in simulation (201 participants) and with a real robot (20 participants). The results show that the trust-POMDP improves human-robot team performance in this task. They further suggest that maximizing trust in itself may not improve team performance.
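To make point (i) concrete, the following is a minimal sketch of how a belief over a latent trust variable could be updated from interaction. It is not the paper's learned model: the three-level discretization of trust, the "human intervenes" observation, and every probability in `P_INTERVENE` and `TRANSITION` are hypothetical values chosen only for illustration. The sketch also simplifies by treating the human's reaction and the robot's outcome as two separate steps.

```python
# Hypothetical discretization of trust: index 0 = low, 1 = medium, 2 = high.
# Assumed observation model: P(human intervenes | trust level).
P_INTERVENE = [0.8, 0.5, 0.1]

# Assumed trust dynamics: TRANSITION[outcome][i][j] is
# P(next trust = j | current trust = i, robot succeeded = outcome).
TRANSITION = {
    True: [[0.6, 0.4, 0.0], [0.0, 0.6, 0.4], [0.0, 0.0, 1.0]],
    False: [[1.0, 0.0, 0.0], [0.5, 0.5, 0.0], [0.0, 0.5, 0.5]],
}


def normalize(weights):
    """Scale non-negative weights so that they sum to one."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("belief has zero total probability")
    return [w / total for w in weights]


def observe(belief, intervened):
    """Bayes update of the trust belief from one observed human action."""
    likelihood = [p if intervened else 1.0 - p for p in P_INTERVENE]
    return normalize([b * l for b, l in zip(belief, likelihood)])


def predict(belief, robot_succeeded):
    """Propagate the belief through the assumed trust dynamics."""
    rows = TRANSITION[robot_succeeded]
    n = len(belief)
    return [sum(belief[i] * rows[i][j] for i in range(n)) for j in range(n)]


def update_belief(belief, intervened, robot_succeeded):
    """One filtering step: condition on the human action, then predict."""
    return predict(observe(belief, intervened), robot_succeeded)


if __name__ == "__main__":
    belief = [1 / 3, 1 / 3, 1 / 3]  # uniform prior over trust levels
    belief = update_belief(belief, intervened=False, robot_succeeded=True)
    print([round(b, 4) for b in belief])
```

Under these assumed numbers, a human who does not intervene shifts probability mass toward high trust, and a successful robot action shifts it further. A planner in the spirit of points (ii) and (iii) would then choose robot actions by evaluating expected long-term team reward over this belief, rather than by maximizing trust itself.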